Google [Odds Are, It’s Wrong] for a look into how science fails to face the shortcomings of statistics. It reminds me of the line in chapter 71: Not to know yet to think that one knows will lead to difficulty. The patient search for truth pales next to our hunger for the answer, or any answer. Science is humanity’s best attempt to counteract this urge, but it can fail, as this article points out. This is especially true for the softer sciences, e.g., sociology, economics, and medicine. The eye-opening information in this report reminds me that we are animals first, and whatever else we think or wish we were comes a distant second. Here is an excerpt:

Especially since the days of Galileo and Newton, math has nurtured science. Rigorous mathematical methods have secured science’s fidelity to fact and conferred a timeless reliability to its findings.

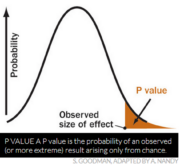

During the past century, though, a mutant form of math has deflected science’s heart from the modes of calculation that had long served so faithfully. Science was seduced by statistics, the math rooted in the same principles that guarantee profits for Las Vegas casinos. Supposedly, the proper use of statistics makes relying on scientific results a safe bet. But in practice, widespread misuse of statistical methods makes science more like a crapshoot.

It’s science’s dirtiest secret: The “scientific method” of testing hypotheses by statistical analysis stands on a flimsy foundation. Statistical tests are supposed to guide scientists in judging whether an experimental result reflects some real effect or is merely a random fluke, but the standard methods mix mutually inconsistent philosophies and offer no meaningful basis for making such decisions. Even when performed correctly, statistical tests are widely misunderstood and frequently misinterpreted. As a result, countless conclusions in the scientific literature are erroneous, and tests of medical dangers or treatments are often contradictory and confusing.

Replicating a result helps establish its validity more securely, but the common tactic of combining numerous studies into one analysis, while sound in principle, is seldom conducted properly in practice.

Experts in the math of probability and statistics are well aware of these problems and have for decades expressed concern about them in major journals. Over the years, hundreds of published papers have warned that science’s love affair with statistics has spawned countless illegitimate findings. In fact, if you believe what you read in the scientific literature, you shouldn’t believe what you read in the scientific literature.

Wow! Experts in statistics have been sounding the alarm for decades. Alas, as with balancing the government’s budget, or preparing for disaster in general and global warming in particular, the experts’ warnings will go unheeded until some awful visitation descends upon them (i.e., the population), as chapter 72 cautions.

As with much in life, science will always be two steps forward, one step back. Consider the following quotes from experts in the field.

“Despite the awesome pre-eminence this method has attained … it is based upon a fundamental misunderstanding of the nature of rational inference, and is seldom if ever appropriate to the aims of scientific research.” —William Rozeboom, 1960

“Huge sums of money are spent annually on research that is seriously flawed through the use of inappropriate designs, unrepresentative samples, small samples, incorrect methods of analysis, and faulty interpretation.” – D. G. Altman, 1994

“Many investigators do not know what our most cherished, and ubiquitous, research desideratum—’statistical significance’—really means. This … signals an educational failure of the first order.” – Raymond Hubbard and J. Scott Armstrong, 2006

“These classical methods [of significance testing] are in fact intellectually quite indefensible and do not deserve their social success.” – Colin Howson and Peter Urbach, 2006

“A finding of ‘statistical’ significance … is on its own almost valueless, a meaningless parlor game.” – Stephen Ziliak and Deirdre McCloskey, 2008

“The methods of statistical inference in current use … have contributed to a widespread misperception … that statistical methods can provide a number that by itself reflects a probability of reaching erroneous conclusions. This belief has damaged the quality of scientific reasoning and discourse.” – Steven Goodman, 1999

“What used to be called judgment is now called prejudice, and what used to be called prejudice is now called a null hypothesis…. It is dangerous nonsense.” – A. W. F. Edwards, 1972